MediaPipe 파헤치기 (4)

해당 글은 본인이 공부하면서 파악하기 위해서 작성한 글입니다. 잘못된 정보나 추가적인 정보가 들어가야 한다면 댓글로 알려주시면 감사하겠습니다!

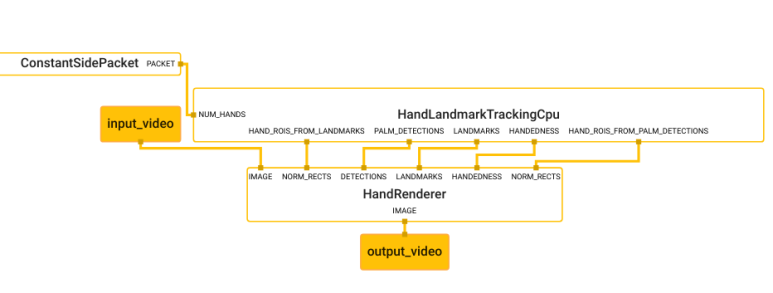

저번 포스팅에서는 hand renderer를 살펴 보았습니다.

이번에는 저번 포스팅에서 살펴보지 못했던 HandLandmarkTrackingCpu를 살펴 보겠습니다.

//mediapipe/modules/hand_landmark:hand_landmark_tracking_cpu

해당 path로 가서 BUILD 파일을 살펴봅니다.

1

2

3

4

5

6

7

8

9

10

11

12

13

14

15

16

17

18

19

20

21

22

mediapipe_simple_subgraph(

name = "hand_landmark_tracking_cpu",

graph = "hand_landmark_tracking_cpu.pbtxt",

register_as = "HandLandmarkTrackingCpu",

deps = [

":hand_landmark_cpu",

":hand_landmark_landmarks_to_roi",

":palm_detection_detection_to_roi",

"//mediapipe/calculators/core:begin_loop_calculator",

"//mediapipe/calculators/core:clip_vector_size_calculator",

"//mediapipe/calculators/core:constant_side_packet_calculator",

"//mediapipe/calculators/core:end_loop_calculator",

"//mediapipe/calculators/core:flow_limiter_calculator",

"//mediapipe/calculators/core:gate_calculator",

"//mediapipe/calculators/core:previous_loopback_calculator",

"//mediapipe/calculators/image:image_properties_calculator",

"//mediapipe/calculators/util:association_norm_rect_calculator",

"//mediapipe/calculators/util:collection_has_min_size_calculator",

"//mediapipe/calculators/util:filter_collection_calculator",

"//mediapipe/modules/palm_detection:palm_detection_cpu",

],

)

해당 target은 mediapipe_simple_subgraph로 되어있습니다.

Subgraph는 graph에서 구성 요소로 들어갈 수 있는 graph 입니다. graph를 모듈화 할 수 있습니다.

(자세한 정보는 여기에 설명 되어있습니다.)

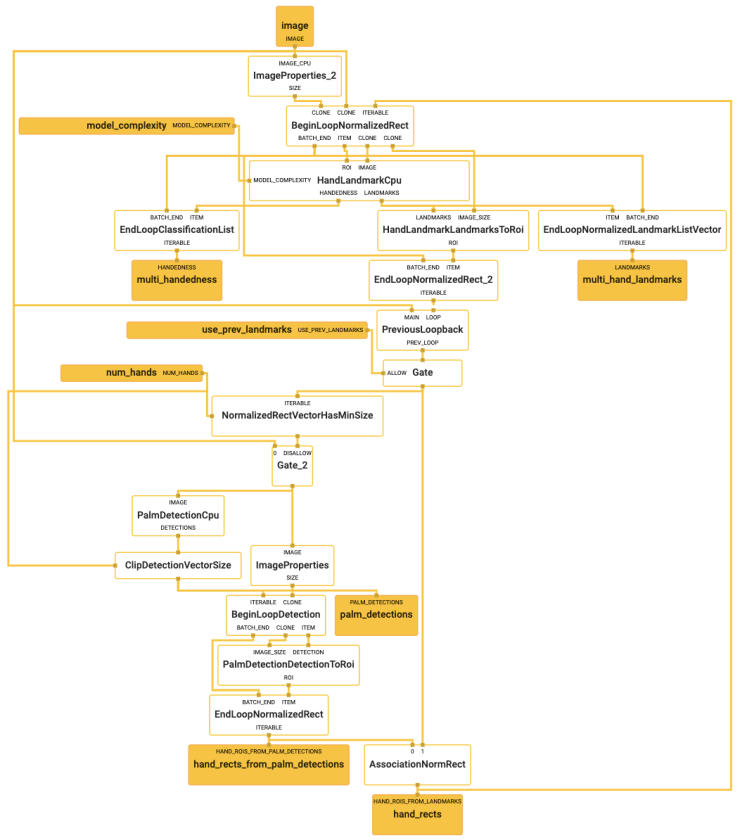

graph 속성에 hand_landmark_tracking_cpu.pbtxt 가 주어져 있으니 해당 graph를 살펴보겠습니다.

허걱… 딱봐도 엄청 복잡해 보입니다.

친절하게도 mediapipe에 올라와 있는 파일은 모두 주석이 정성스럽게 작성되어 있습니다. 우선 입출력 stream 부터 확인 해보겠습니다.

1

2

3

4

5

6

7

8

9

10

11

12

13

14

15

16

17

18

19

20

21

22

23

24

25

26

27

28

29

30

31

32

33

34

35

36

37

38

# CPU image. (ImageFrame)

input_stream: "IMAGE:image"

# Max number of hands to detect/track. (int)

input_side_packet: "NUM_HANDS:num_hands"

# Complexity of the hand landmark model: 0 or 1. Landmark accuracy as well as

# inference latency generally go up with the model complexity. If unspecified,

# functions as set to 1. (int)

input_side_packet: "MODEL_COMPLEXITY:model_complexity"

# Whether landmarks on the previous image should be used to help localize

# landmarks on the current image. (bool)

input_side_packet: "USE_PREV_LANDMARKS:use_prev_landmarks"

# Collection of detected/predicted hands, each represented as a list of

# landmarks. (std::vector<NormalizedLandmarkList>)

# NOTE: there will not be an output packet in the LANDMARKS stream for this

# particular timestamp if none of hands detected. However, the MediaPipe

# framework will internally inform the downstream calculators of the absence of

# this packet so that they don't wait for it unnecessarily.

output_stream: "LANDMARKS:multi_hand_landmarks"

# Collection of handedness of the detected hands (i.e. is hand left or right),

# each represented as a Classification proto.

# Note that handedness is determined assuming the input image is mirrored,

# i.e., taken with a front-facing/selfie camera with images flipped

# horizontally.

output_stream: "HANDEDNESS:multi_handedness"

# Extra outputs (for debugging, for instance).

# Detected palms. (std::vector<Detection>)

output_stream: "PALM_DETECTIONS:palm_detections"

# Regions of interest calculated based on landmarks.

# (std::vector<NormalizedRect>)

output_stream: "HAND_ROIS_FROM_LANDMARKS:hand_rects"

# Regions of interest calculated based on palm detections.

# (std::vector<NormalizedRect>)

output_stream: "HAND_ROIS_FROM_PALM_DETECTIONS:hand_rects_from_palm_detections"

네…. 자세히 설명 되어있습니다…(귀찮…)

그럼 input_stream:”IMAGE:image” 부터 한번 순차적으로 따라가 보겠습니다.

This post is licensed under CC BY 4.0 by the author.